02 Feb

Overview

For the last few months at work, I’ve been involved in quite a lot of data analysis work. Using monitoring tools such as Splunk the business has identified that a small portion of web and mobile users trying to access certain parts of their account details have been getting either extremely slow responses, or the calls are simply failing.

Between 07:00 – 22:00, my team’s platform generally receives between 160,000 and 269,000 user API requests per hour, the general details of these requests are saved in a transactional database table and the full details of what was requested by the user and what data we return are stored in plaintext logfiles, this translates into an average 1 million lines of log text per hour (more on that later).

The API calls made by the user can consist of calls into multiple different platform teams across the business.

https://mydomain.com/api/v1/userStuff/UserRef?embed=platformTeam1details, platformTeam1details , platformTeam2details, platformTeam3details &date1=2022-01-12&date2=2022-01-12

(An example request)

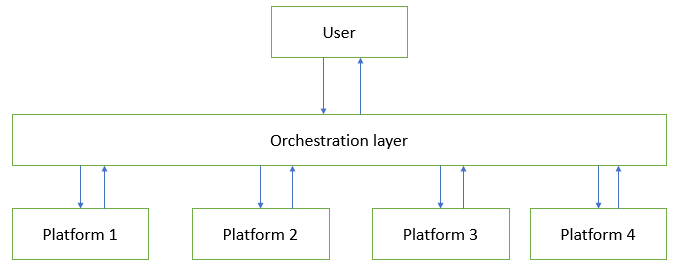

This requires an orchestration layer to manage the routing and sequencing of requests. Some requests will be dependent on the return values of prior requests so keeping track of everything is important.

A diagram of how a request gets to us. The reality involves many more layers and connections, but this simplified view should suffice for now.

The problem

My platform didn’t write these API’s. We don’t have any documentation on the specific business logics they are making requests to, and unfortunately neither do the people in the business who are raising the tickets asking us to investigate the slow response times. All that both sides seemingly can do right now is share time frames when we know the requests were run and when responses were returned (or the service timed out), and scour the log files at both ends to try and make sense of what’s going on. I know, I know this seems like a really rubbish way of doing things, and I don’t disagree but if I don’t move on quickly, I might start crying.

My initial approach

The largest issue in terms of the numbers of users affected is API requests timing out after 30 seconds. This timeout period is hardcoded by the business calling us, our platform lets requests run for up to 60 seconds before timing out, which increases the number of log file rows I must search though, awesome.

The way I’m receiving the work is via updates on a JIRA ticket which lists specific API requests and timestamps of when the orchestration layer received them, and some “educated” guesses at what business logics they are calling into my team for.

So firstly, I’m going to the transactional database, and searching the timeframes the calls were made. Then I’m trying to identify the relevant records and business logics being called based on the genericised message requests and response payloads. The data I’m really interested in is the text logfiles, but I’ve got to start with the transactional DB to identify which AWS machines handled the requests, this, in turn, tells me which logfiles to look through. Due to the number of API requests we receive, we have to split logging between four log files per day (all being simultaneously written to), each of which averages at 1GB in size by the end of the day.

Working with these log files is tricky, tools like notepad & notepad++ hang and crash if you try to open them and I don’t have the ability (due to corporate policy) to download and install any applications that are built specifically for opening large logfiles. I do however have Visual Studio Code installed which I quickly discovered can load these log files…eventually. But I found searching through them using VSC is not acceptable due to the lag and frequent crashes.

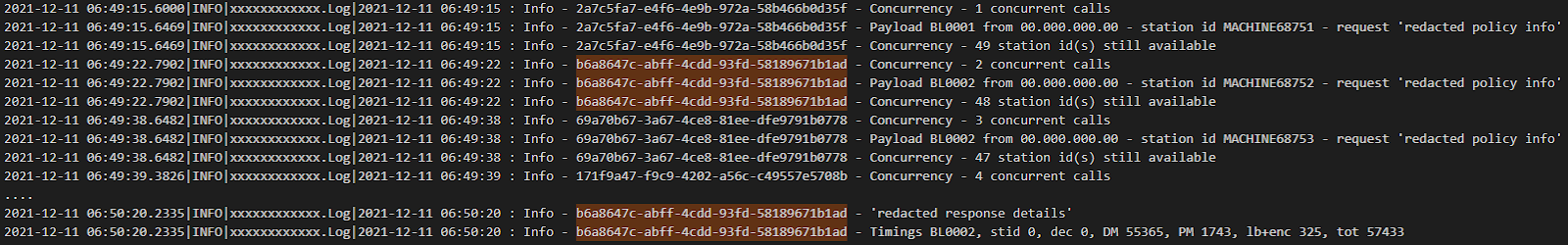

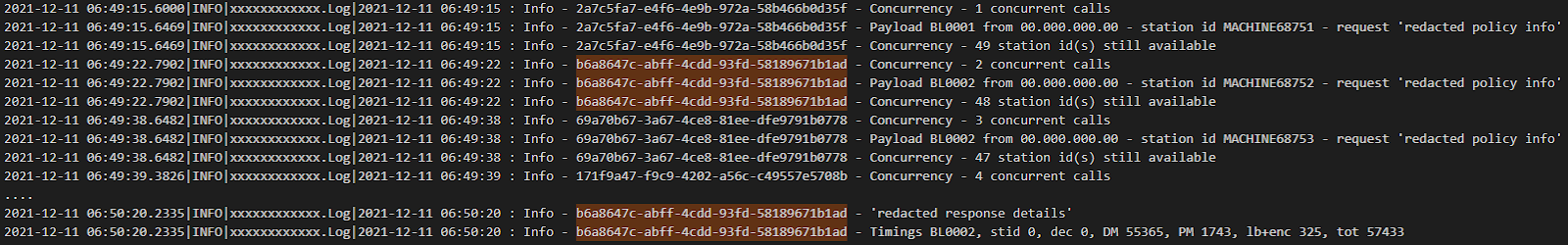

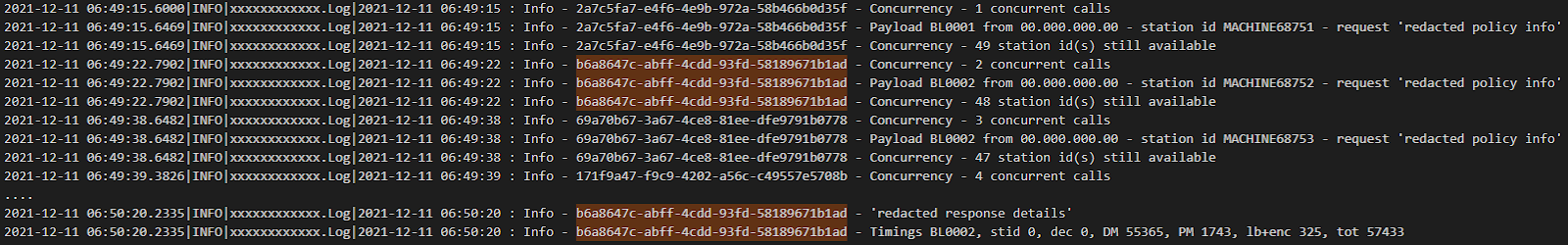

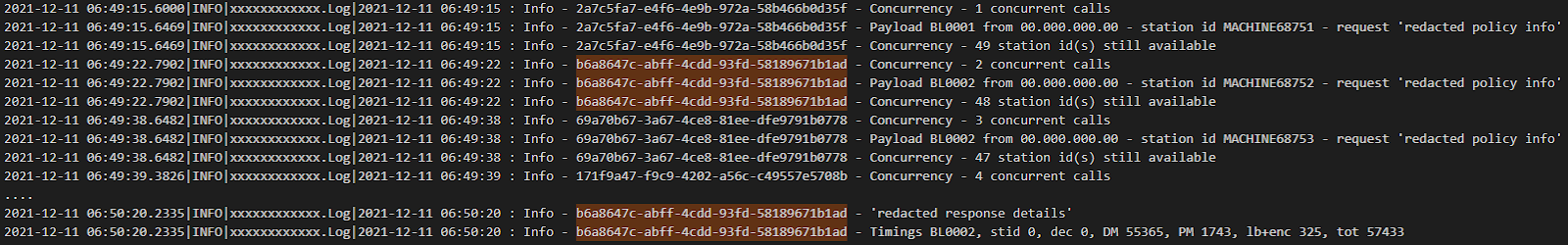

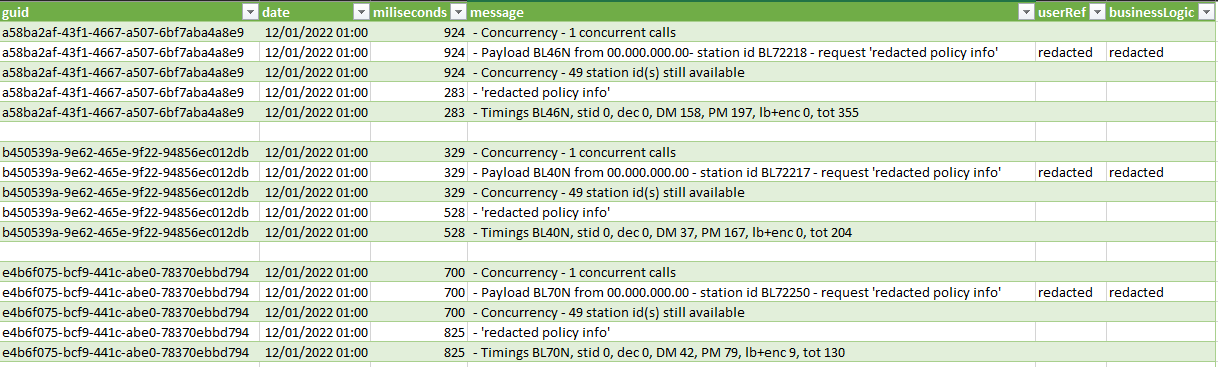

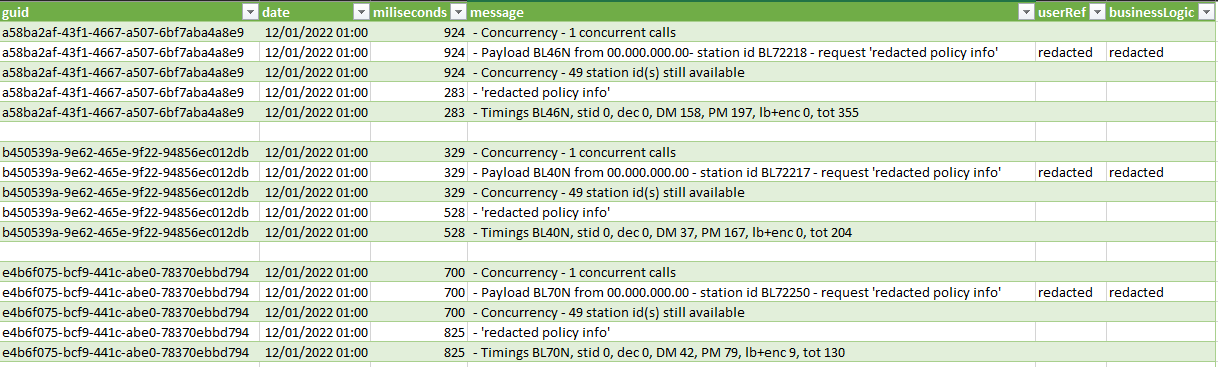

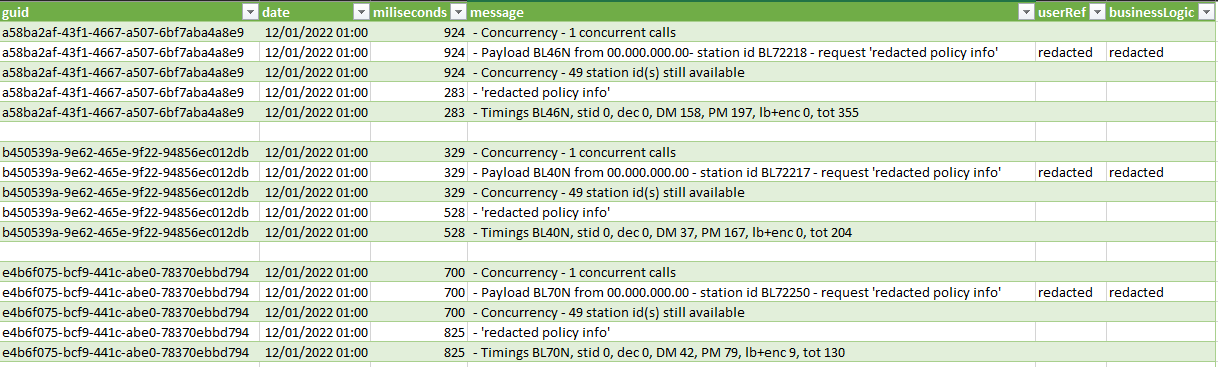

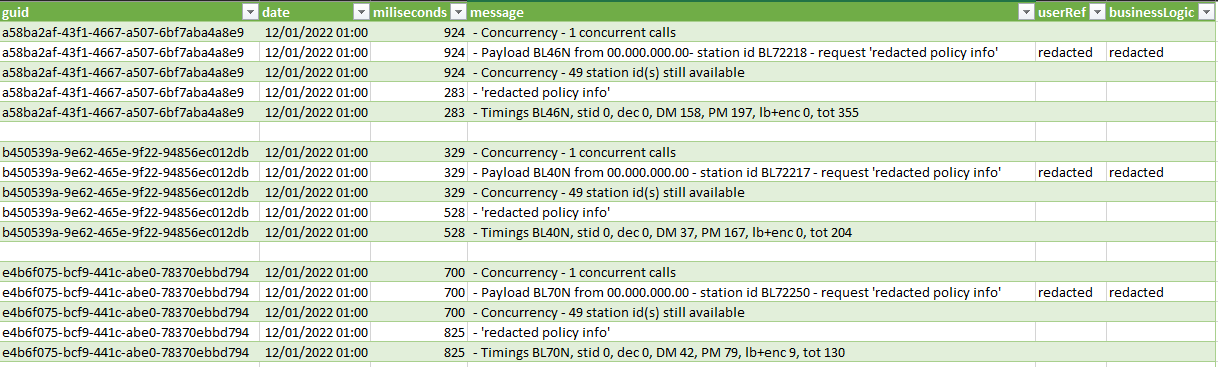

However, being able to open them gave me insight into how the data was logged and opened other possibilities. I could see that each API request had 5 different entries in the log file,

- 1st – How many calls are currently processing

- 2nd – Includes the request body (includes user refence data and which BL is being called)

- 3rd – how many requests can be processed

- 4th – The response body

- 5th – Total response time in milliseconds

All lines are date stamped and correlated with a guid and include lots of other data such as log level classification etc.

Example of some log data, all sensitive user and company data has been obfuscated or redetected.

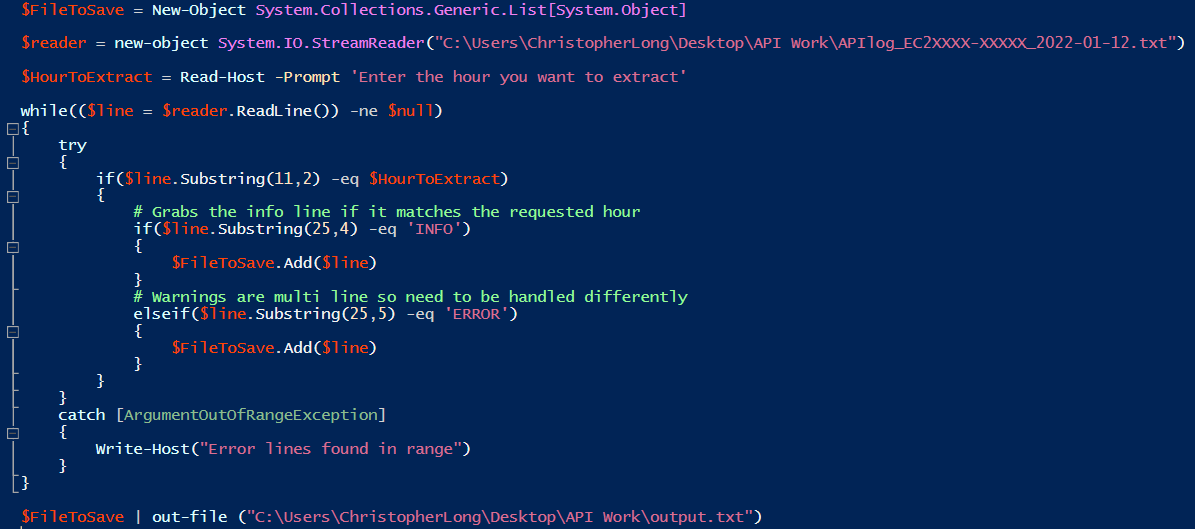

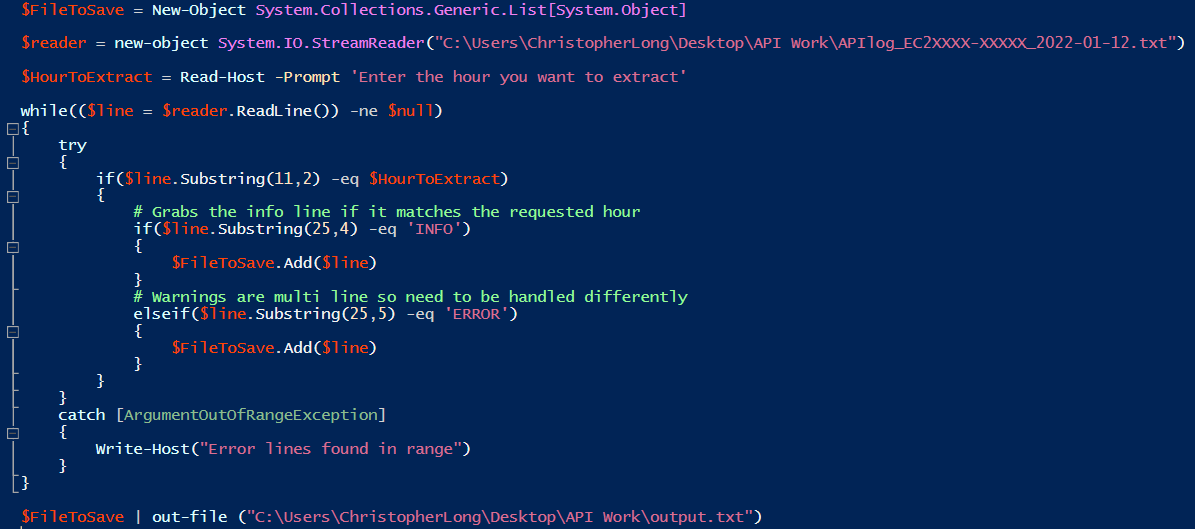

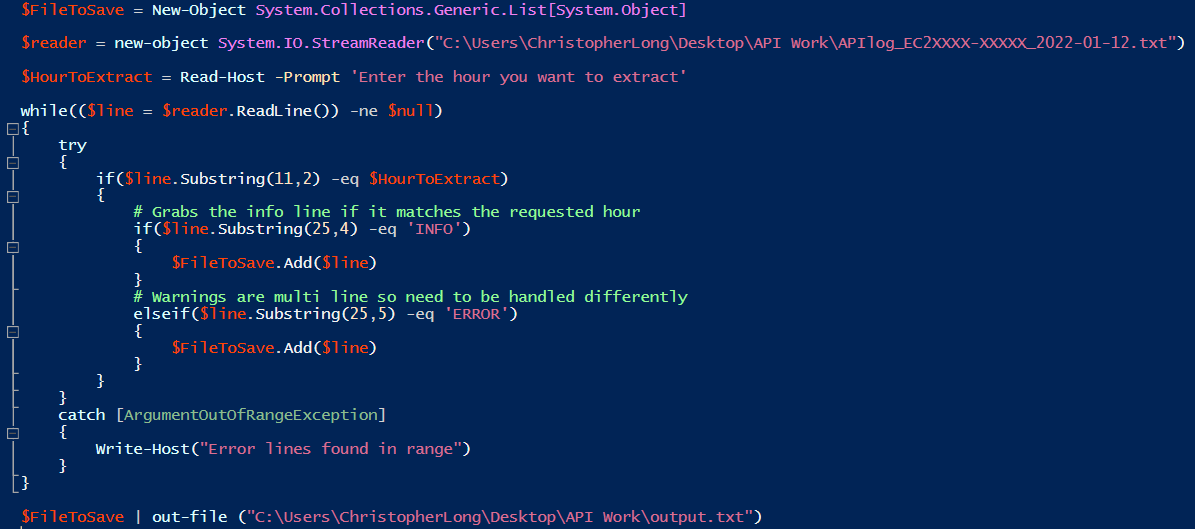

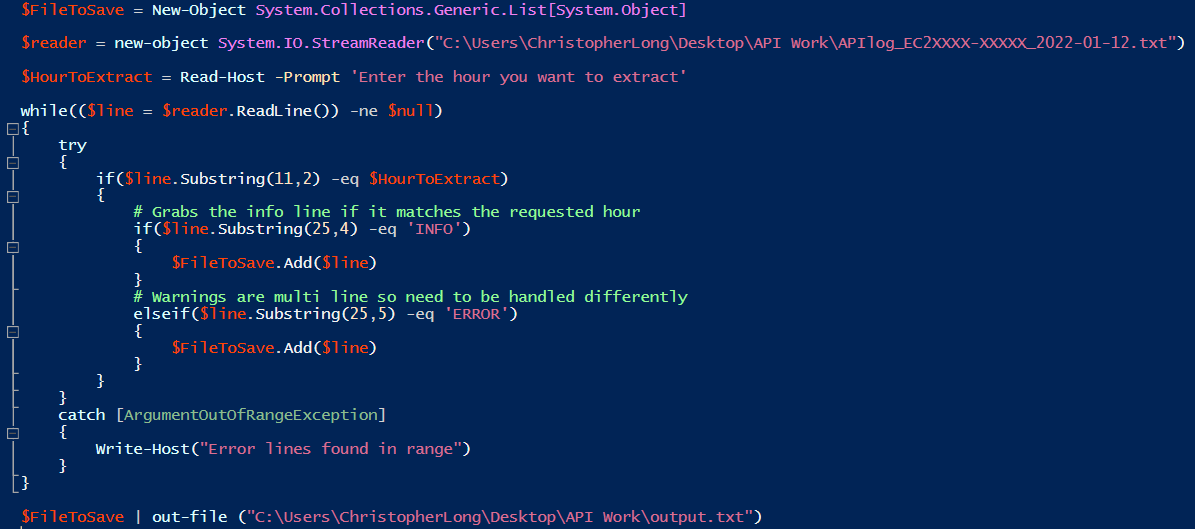

Understanding the line format allowed me to quickly put together a CLI based PowerShell script to export the lines in the relevant timeframe to a much smaller file which I could open and browse through.

This gave me a workflow to start investigating with, but it still required A LOT of manual data collation. I had to do this for each of the 4 logfiles and piece together the API sequences across them based on the timestamps.

I started thinking of ways to speed this up, ideally, I needed to be able to interrogate each second log line (of a correlation group), get the user reference data if it’s what I’m looking for, return all the other 4 related lines as well and save them somewhere. This is something I wasn’t sure I had the PowerShell skills for, but I was pretty sure it would be simple enough in C#, a language I’m much more familiar with.

Writing the app

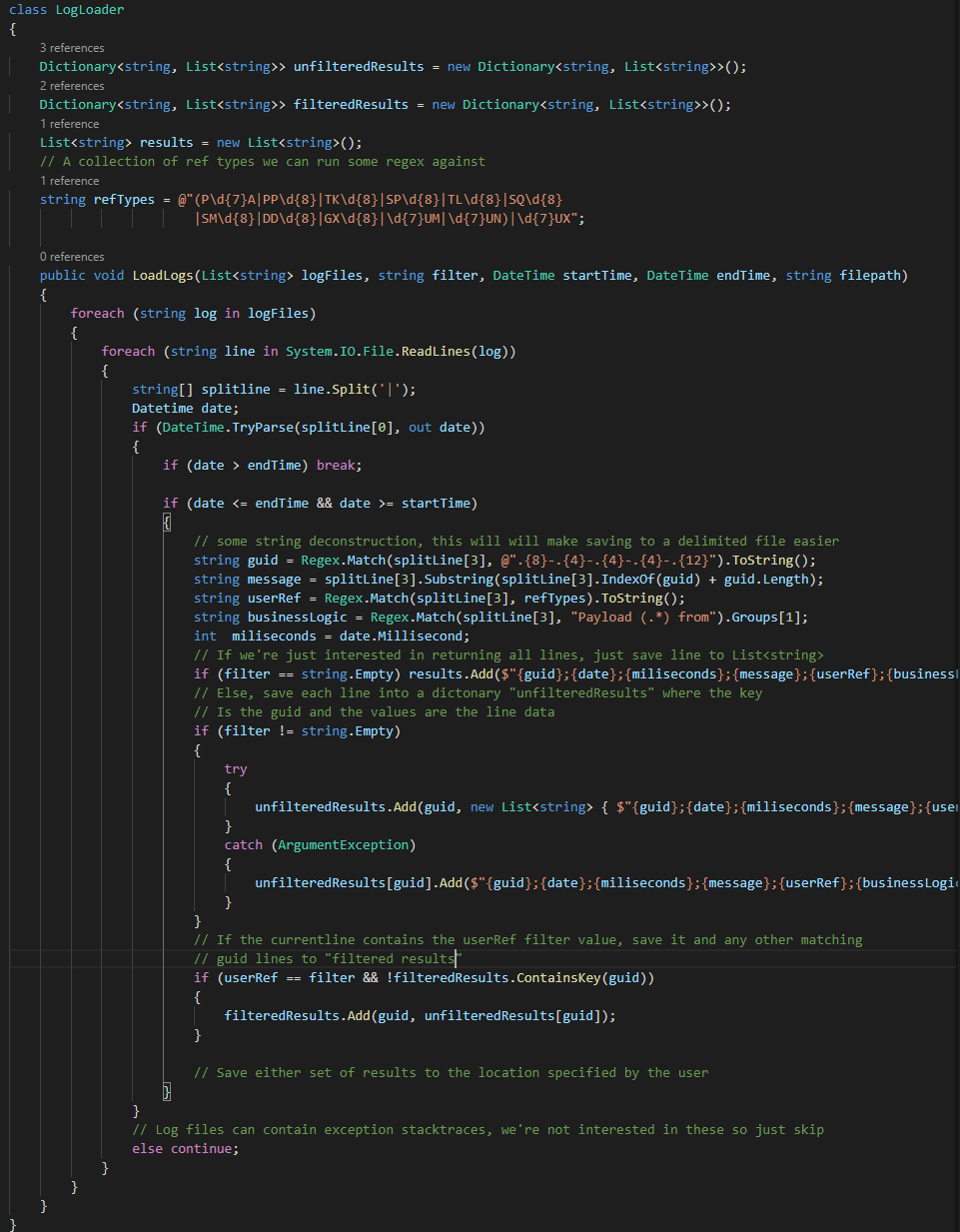

I ended up thinking of two use cases for my C# app:

- I want all the data from the logs between two points in time – This is useful for other types of investigation I’m not going into in this post.

- I want only the data that makes reference to a filter value I provide, such as a user reference string.

And I want to save to a CSV type file for easier post-search analysis using something like excel (I ♥ Power query).

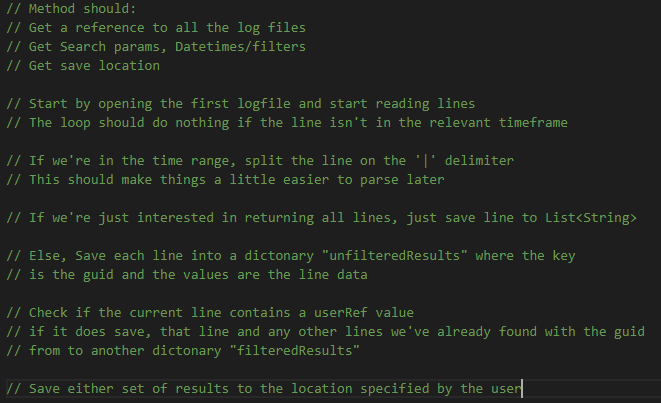

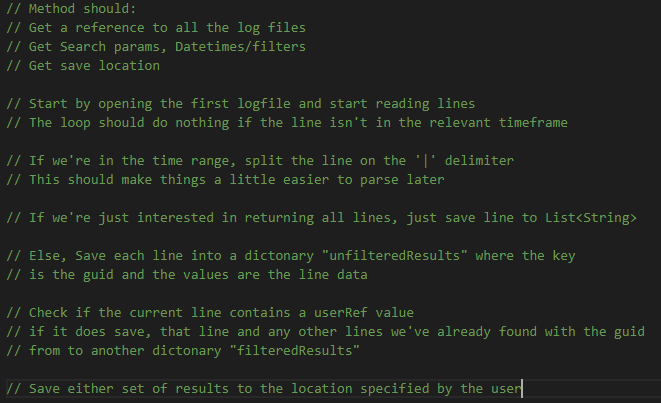

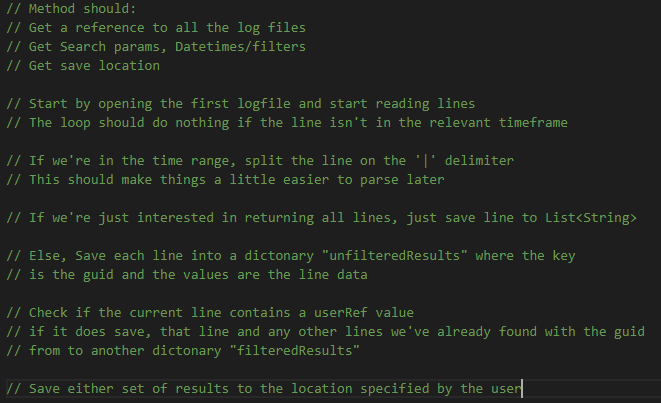

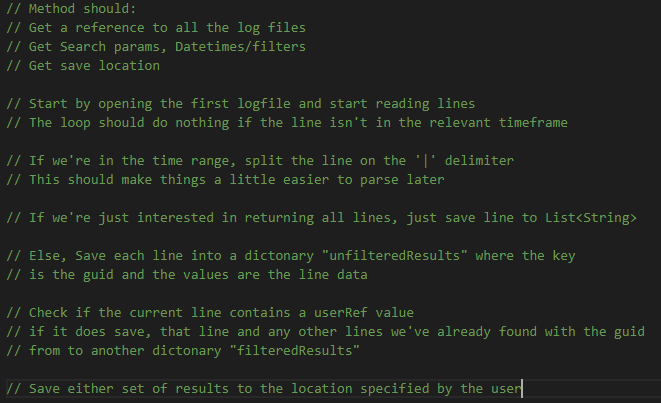

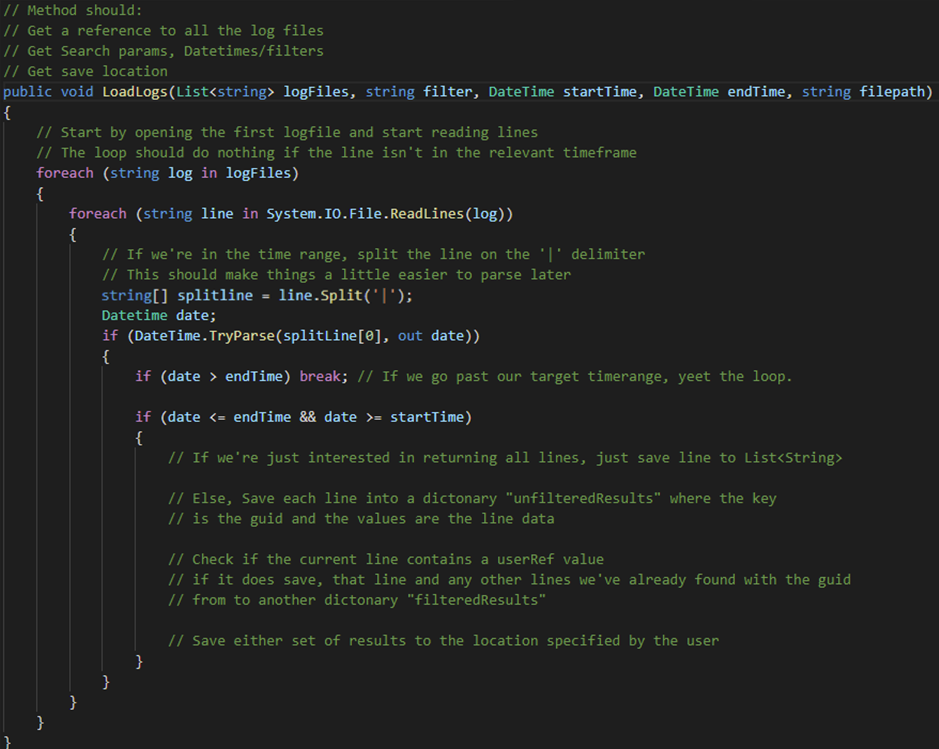

When starting a new project, I like to write out the main tasks I want the program to achieve, inside my code editor in a logical order and then start building out a code skeleton, like so:

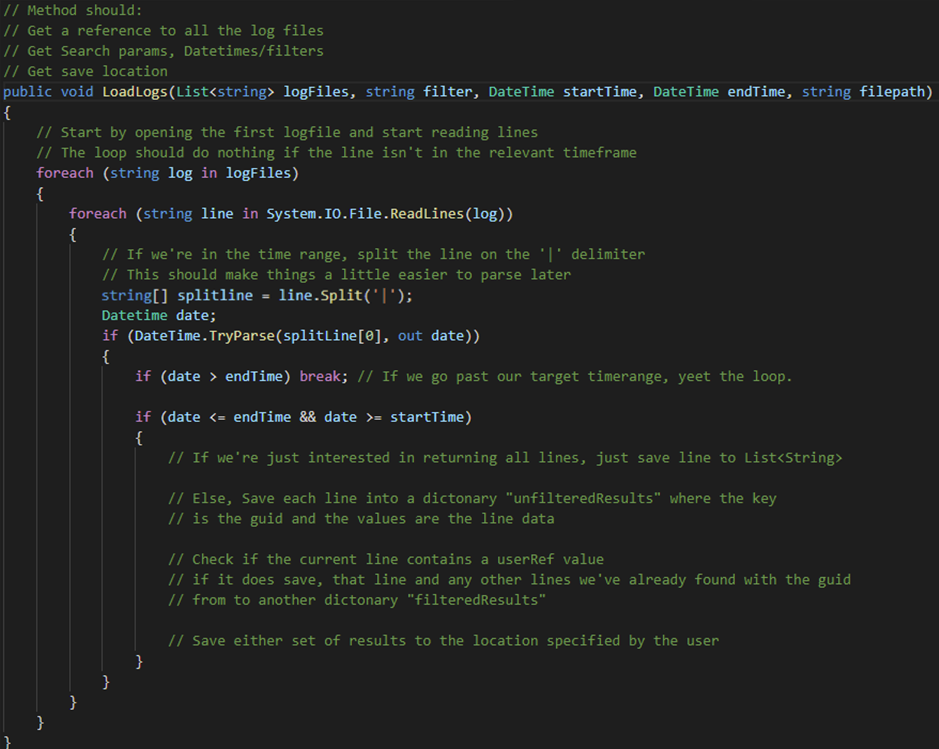

So first I set up the main method, I use my notes to structure the code. I’ll clean them up later.

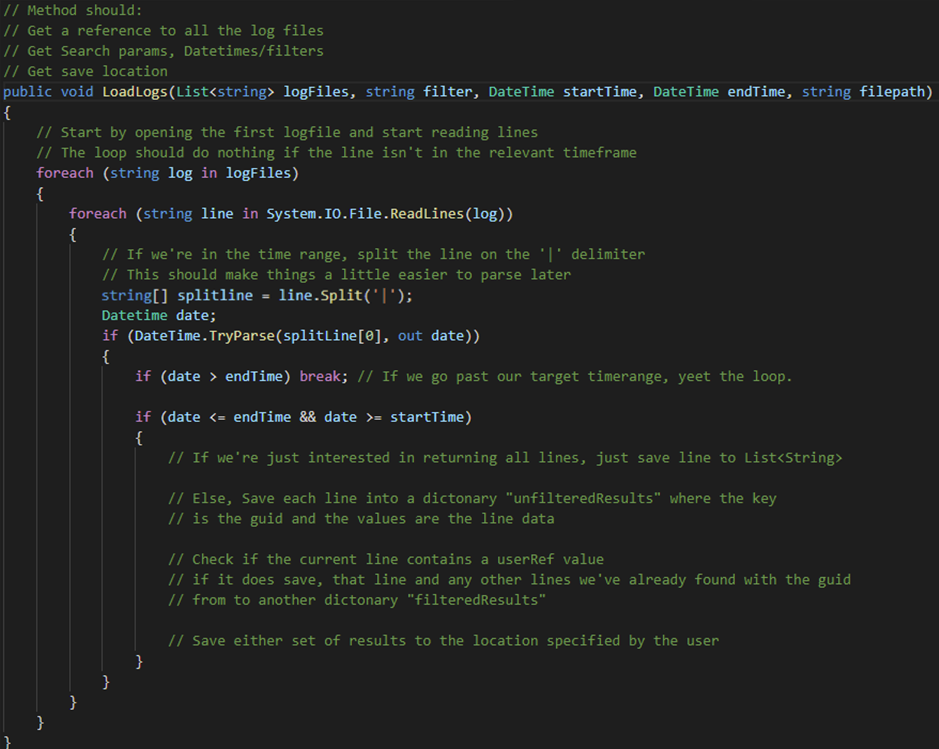

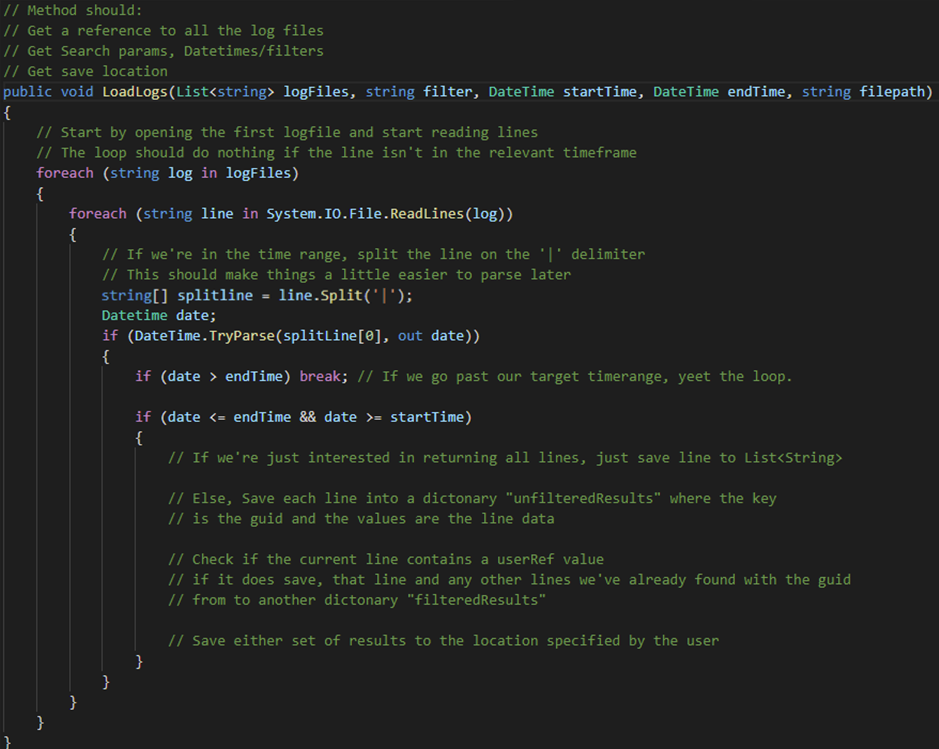

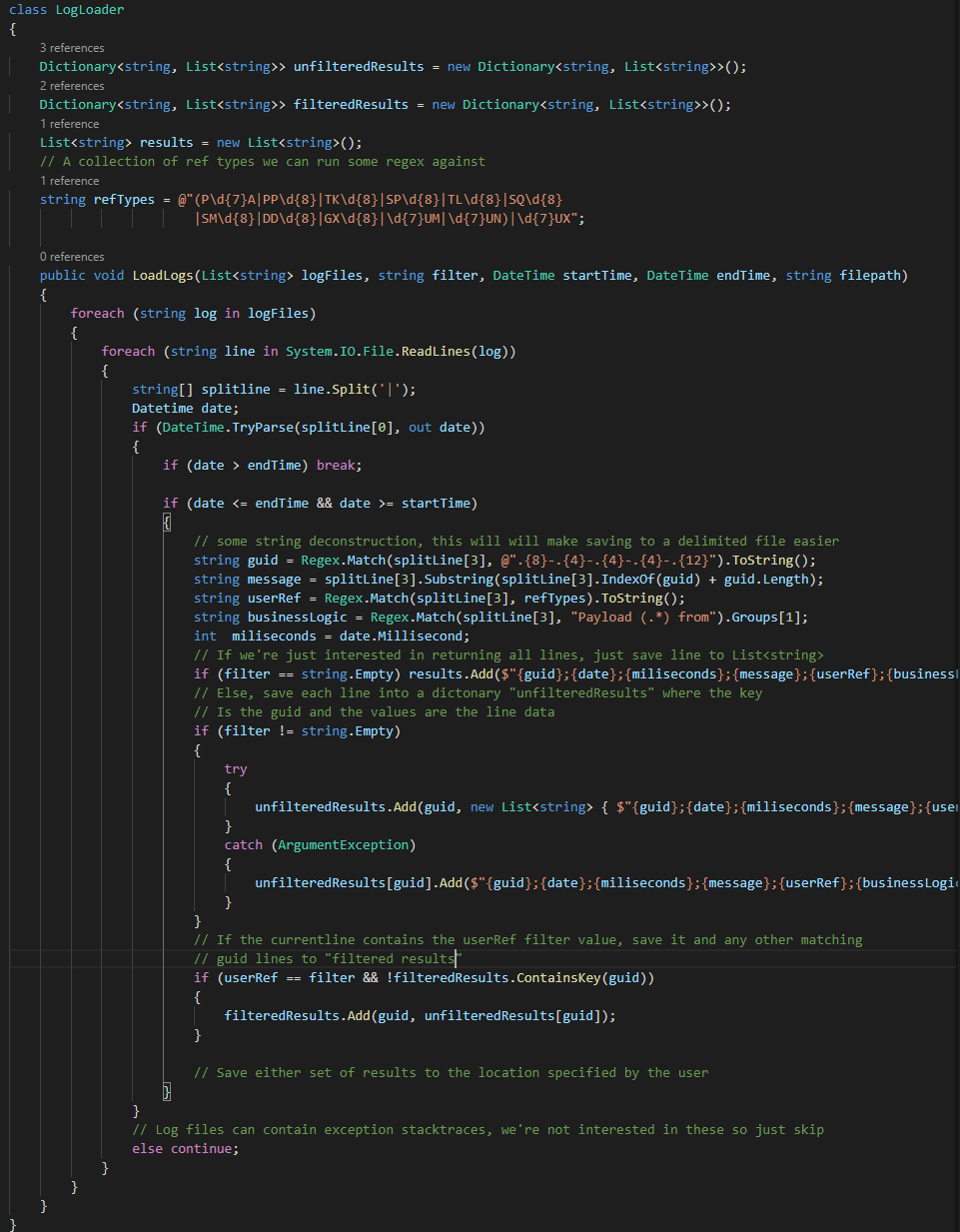

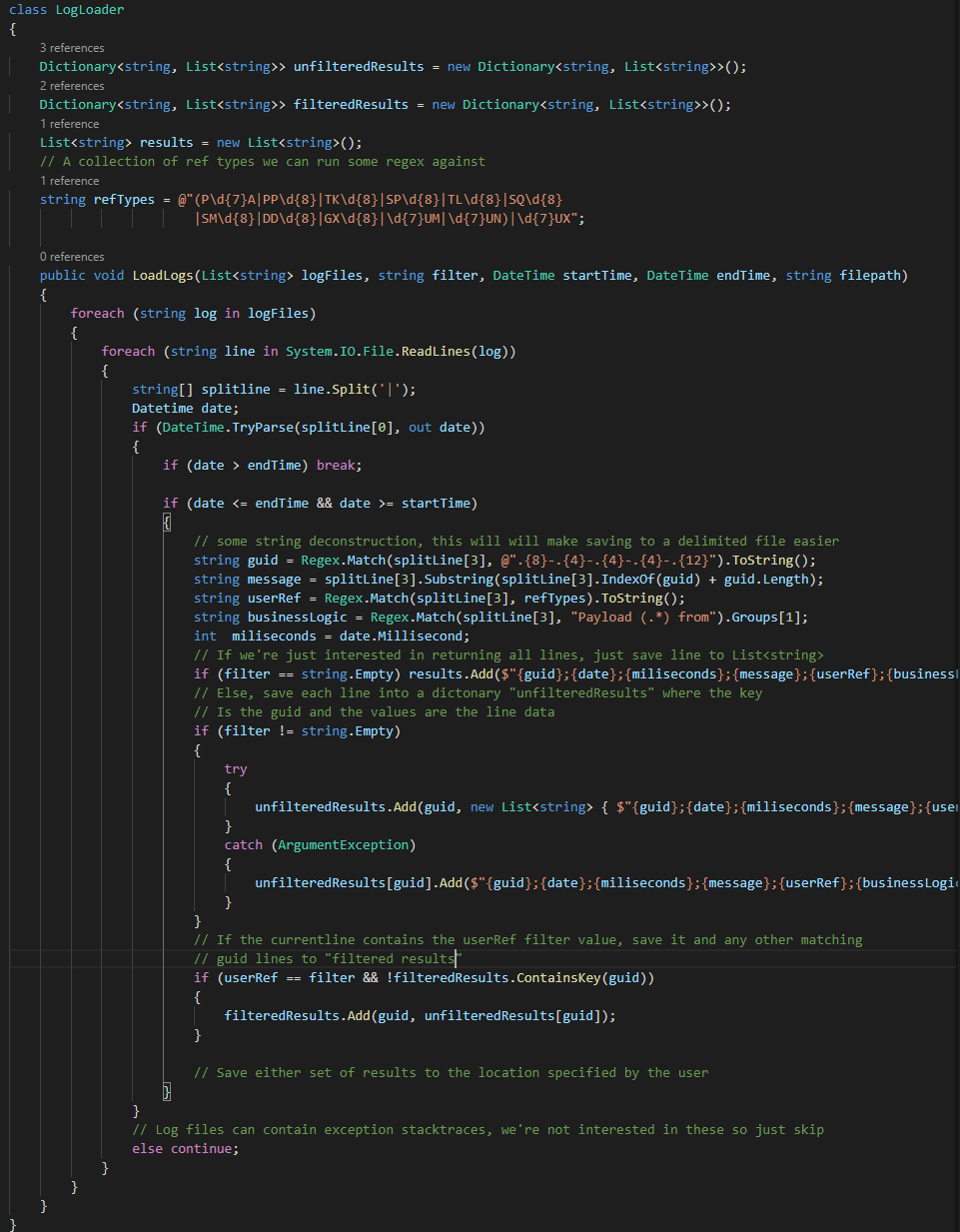

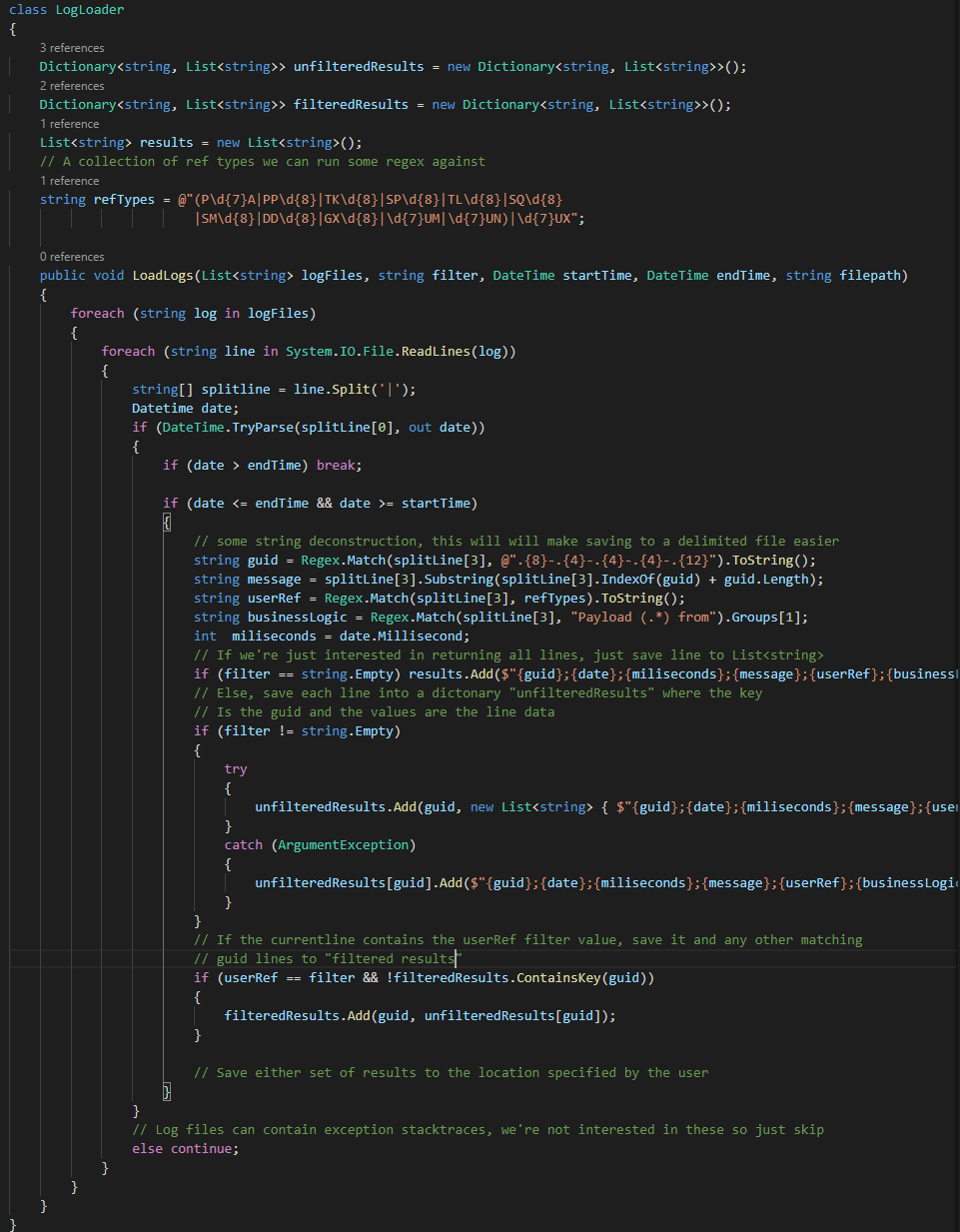

Theses nested loops shouldn’t be too difficult to understand together with the notes, and at this point I can start filling out some of the main logic.

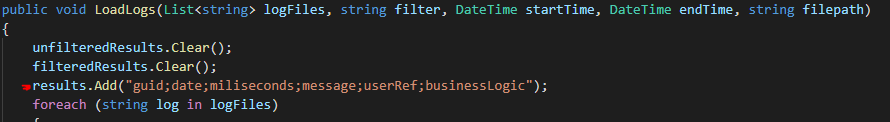

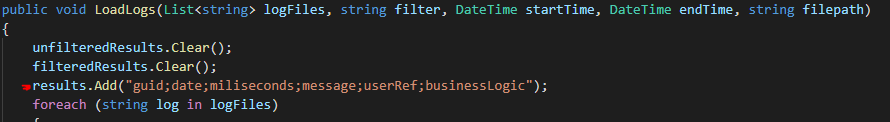

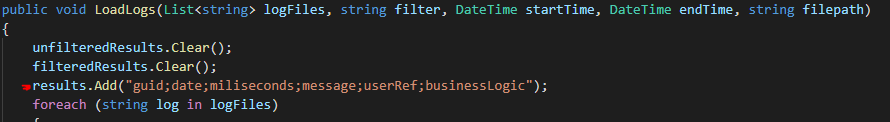

You can see in the new notes that I’ve realised that if I’m going to save to a delimited file format, I’ll need to do some string deconstruction to grab the data I’m interested in and add the delimiters in myself. I’ve opted for semicolons instead of commas because they don’t appear in the log data as far as I can tell.

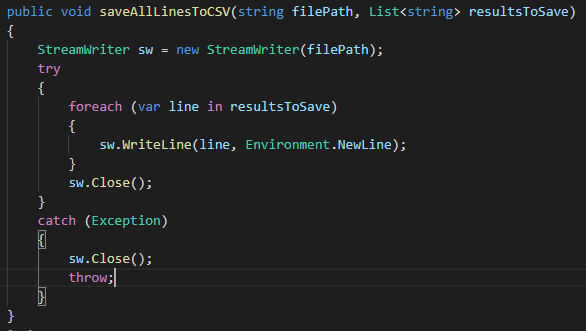

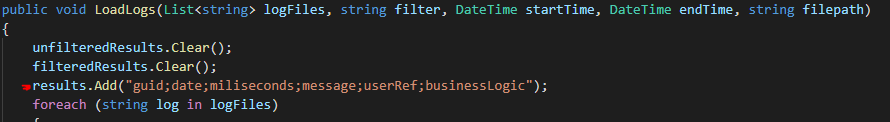

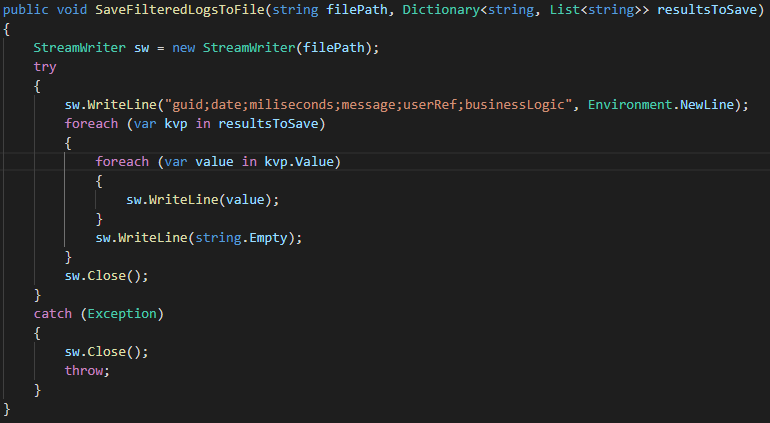

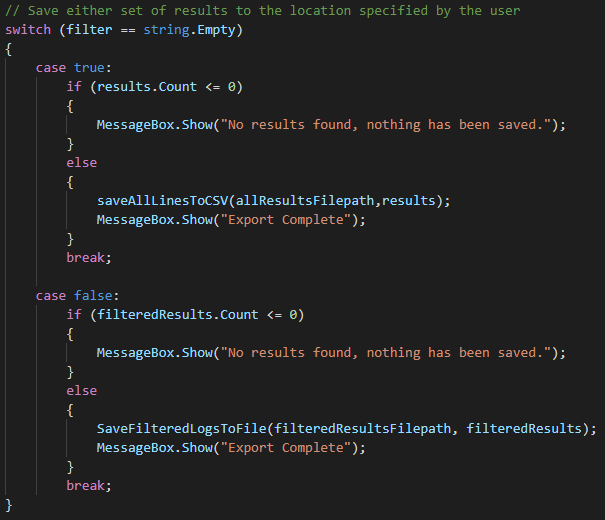

Next step is saving the data, this method is getting a big already so I’m going to create two separate methods that will deal with the two different save types.

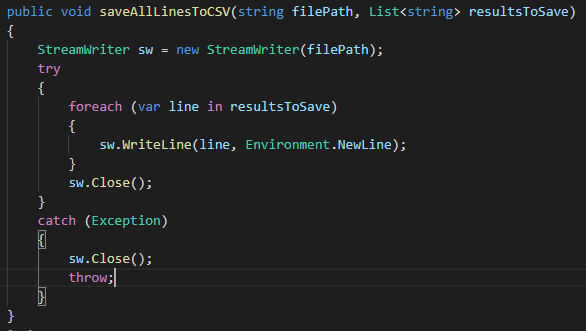

This one just uses a StreamWriter to save each element of the full results list to a new file. To make things simple

FYI – I’m adding the CSV header row into the list before anything is written right at the start of the method.

Note: Remember to .Close() your streams!

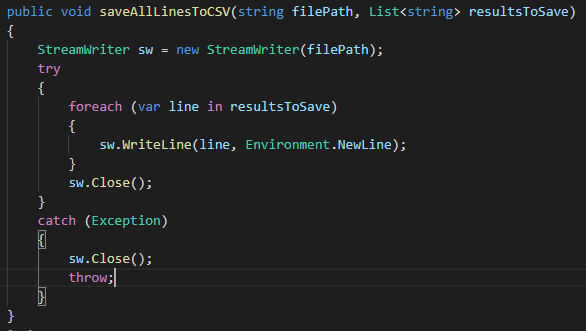

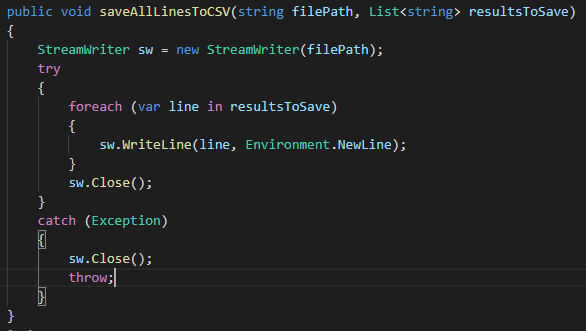

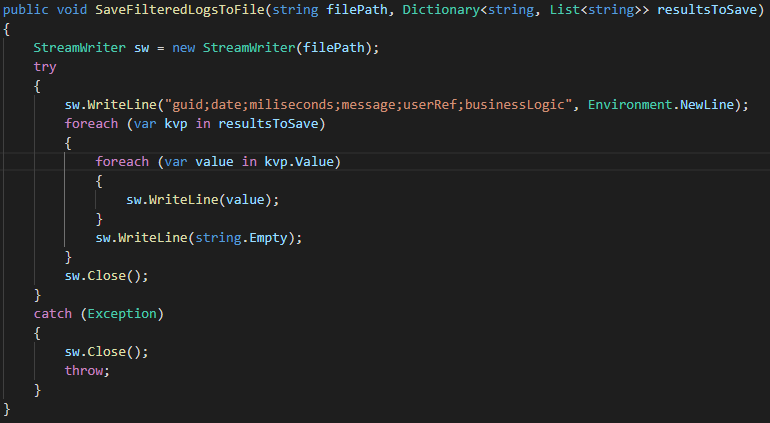

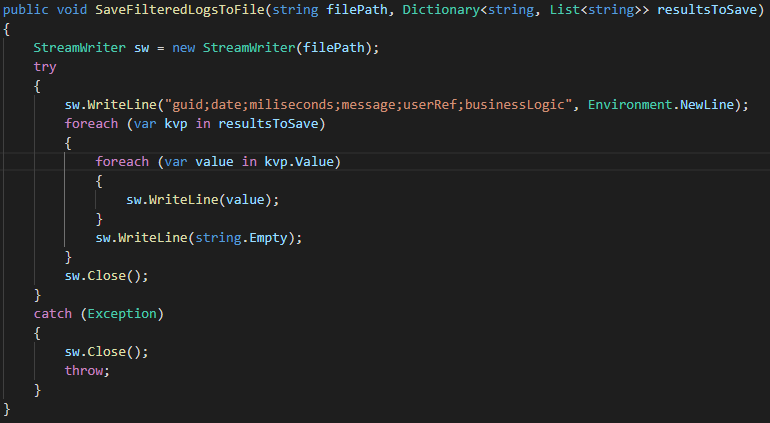

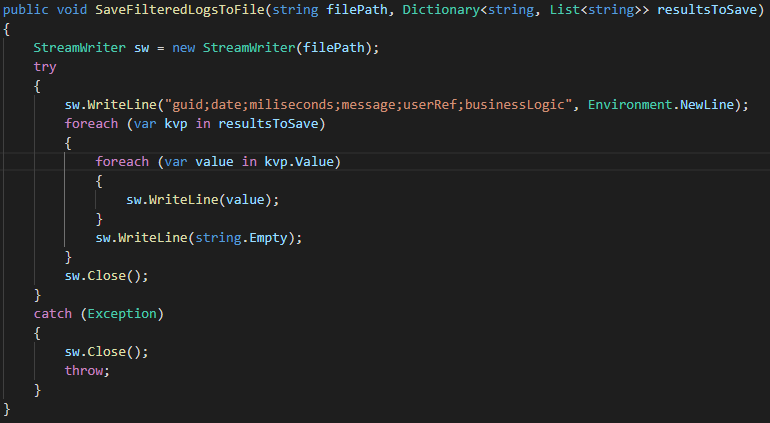

This second method saves the filtered results. As we’re reading a dictionary instead of a list the implementation needs to be slightly different.

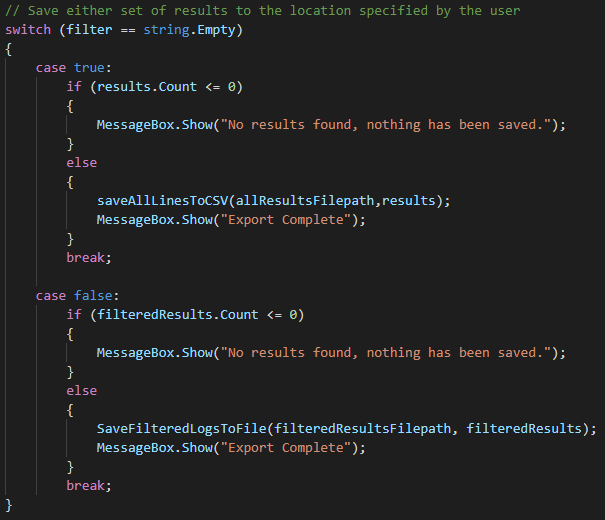

And finally, I add in a switch statement into the main method to pick a save method based on the request

At this point I was using the application as a command line tool. While it was performing faster than my previous PowerShell script and giving me much nicer grouped output, I wanted to share this with the wider team, I felt that warranted adding a user interface.

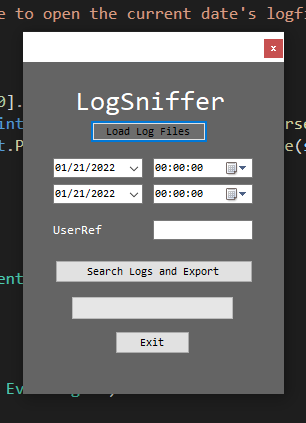

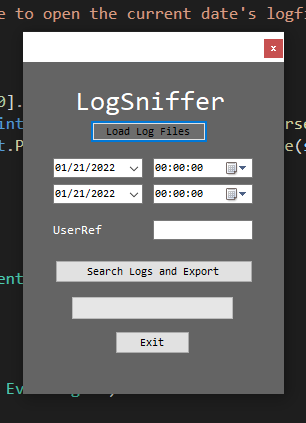

I’m not going to go into too much detail on how I set up the WinForms GUI as it’s all fairly intuitive inside visual studio. First, I mocked up what I wanted the app to look like, nothing too fancy:

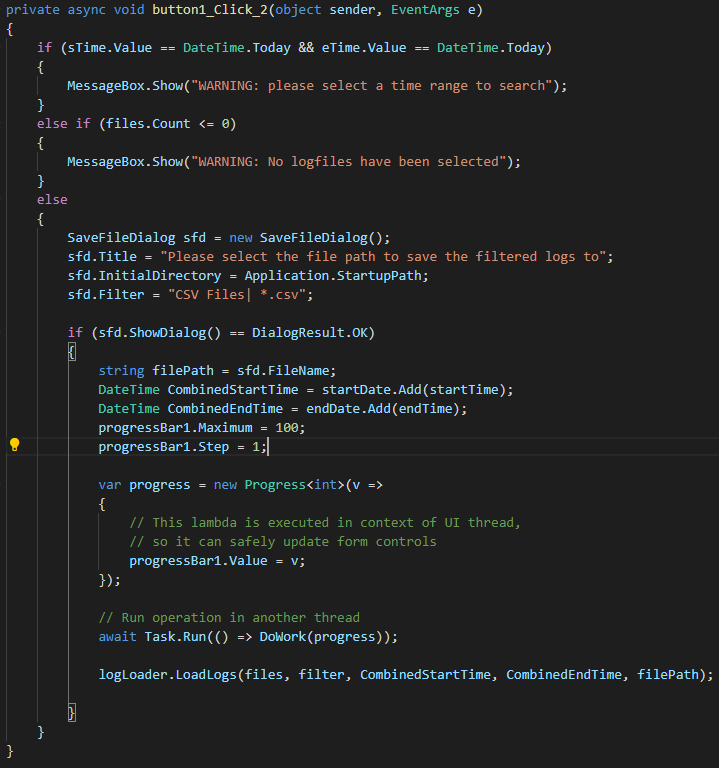

Then I hooked up all the buttons’ methods and built in some basic safety controls.

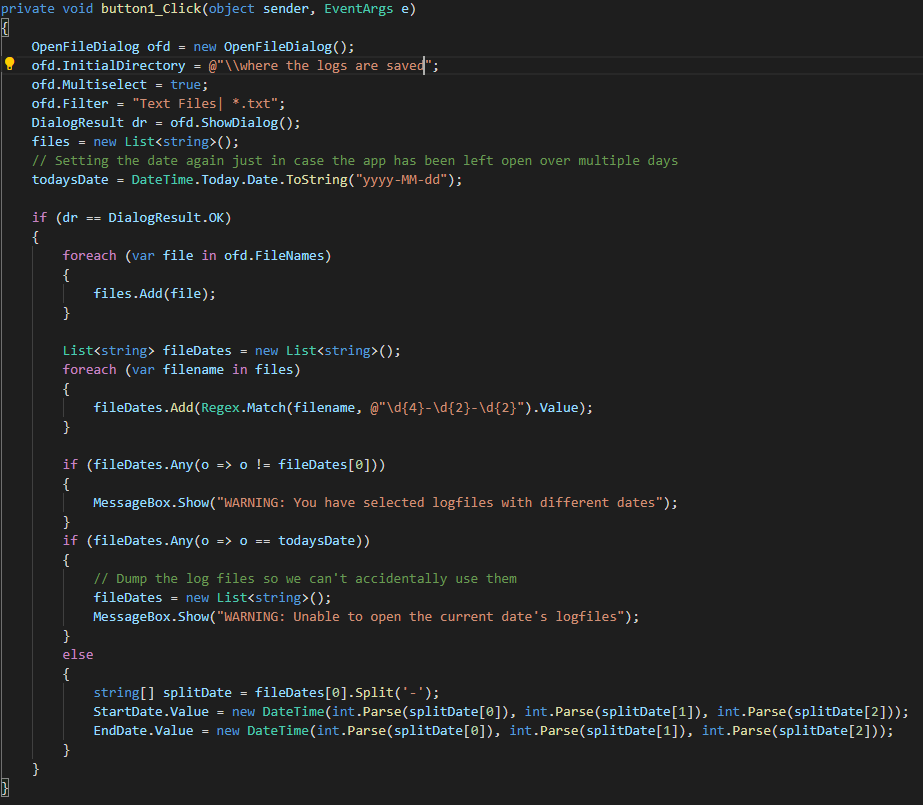

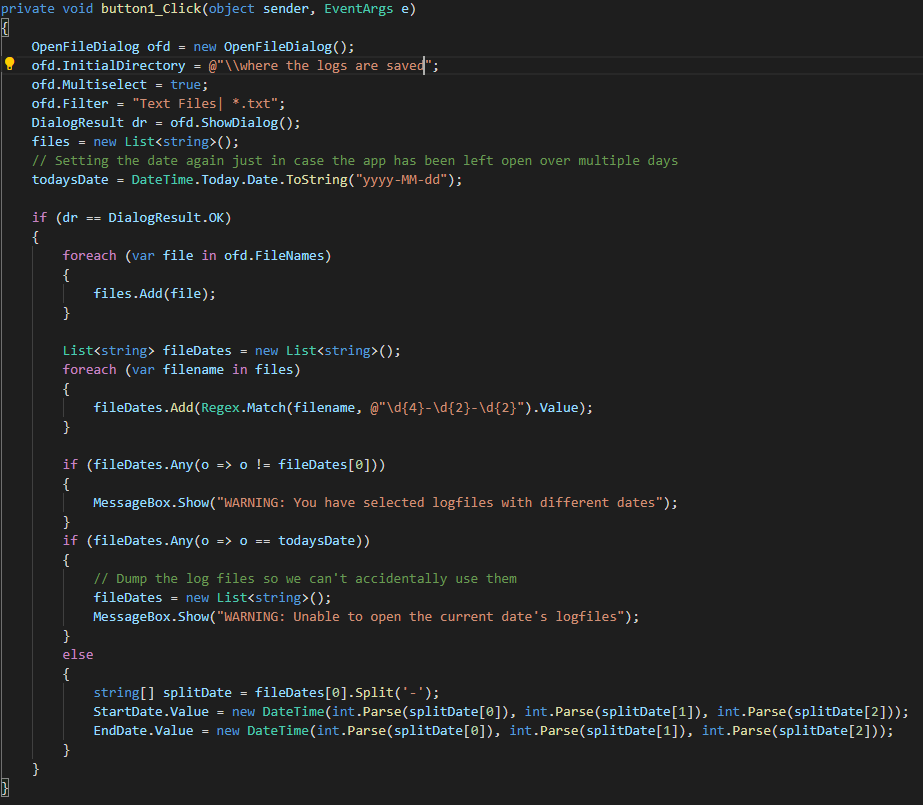

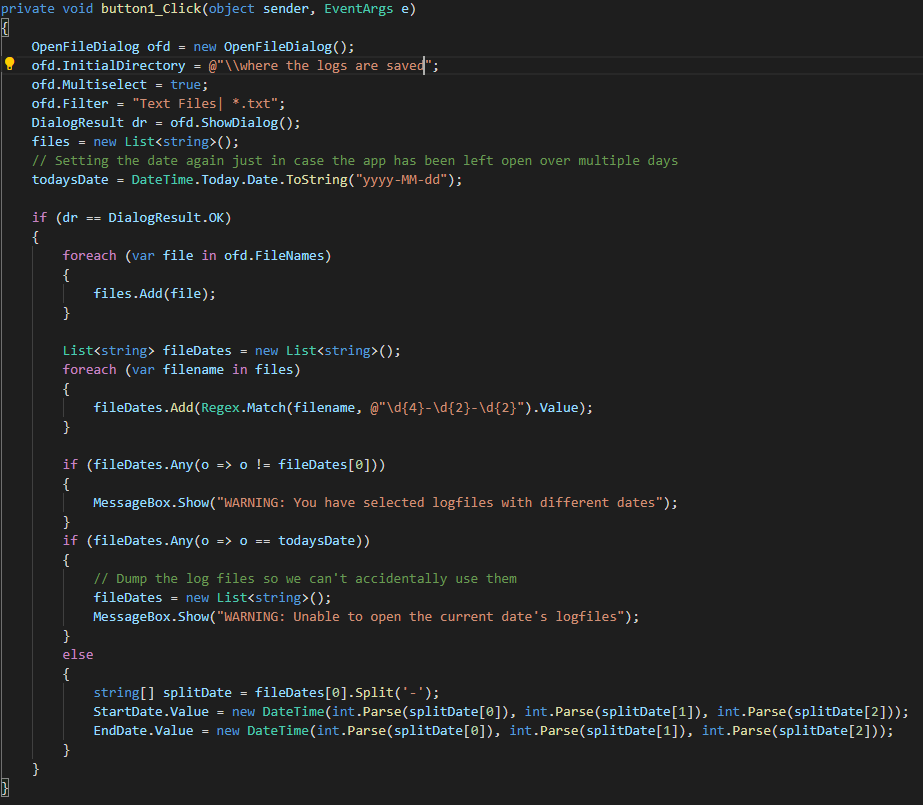

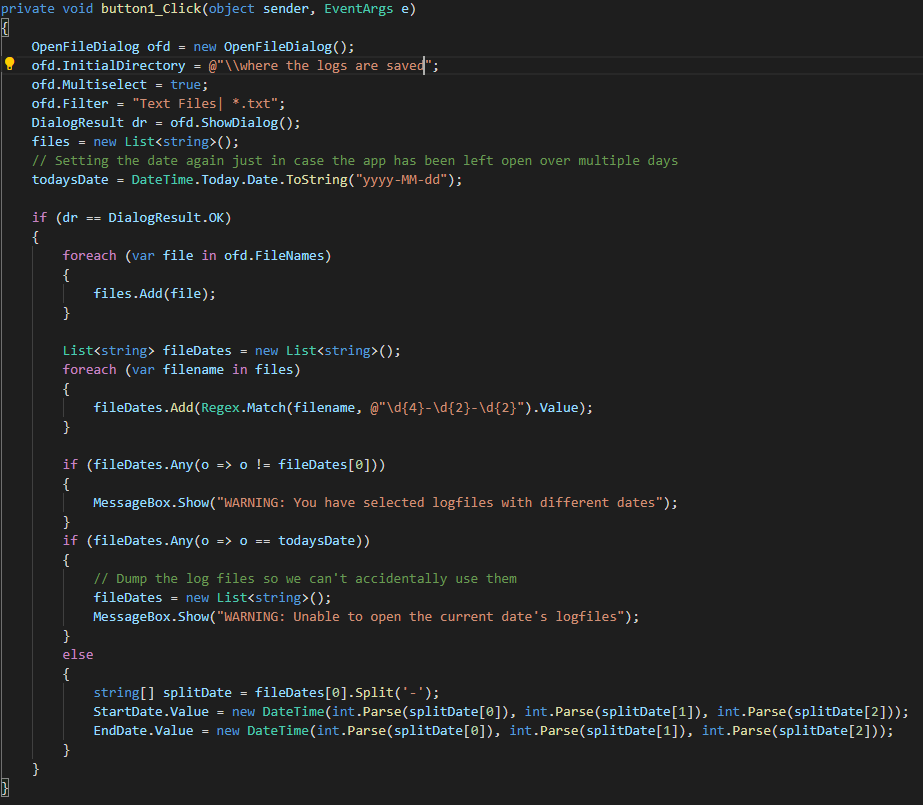

The Load Log Files button code:

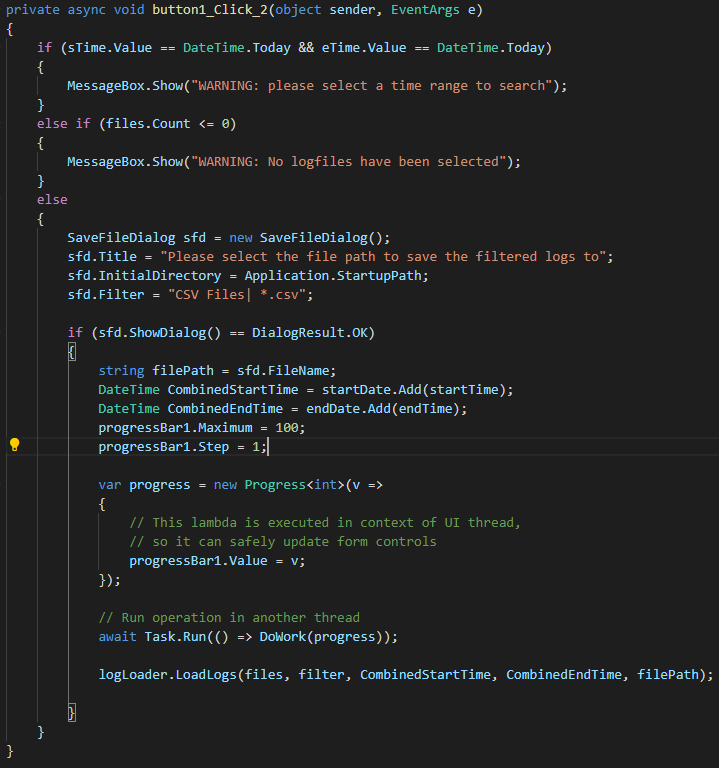

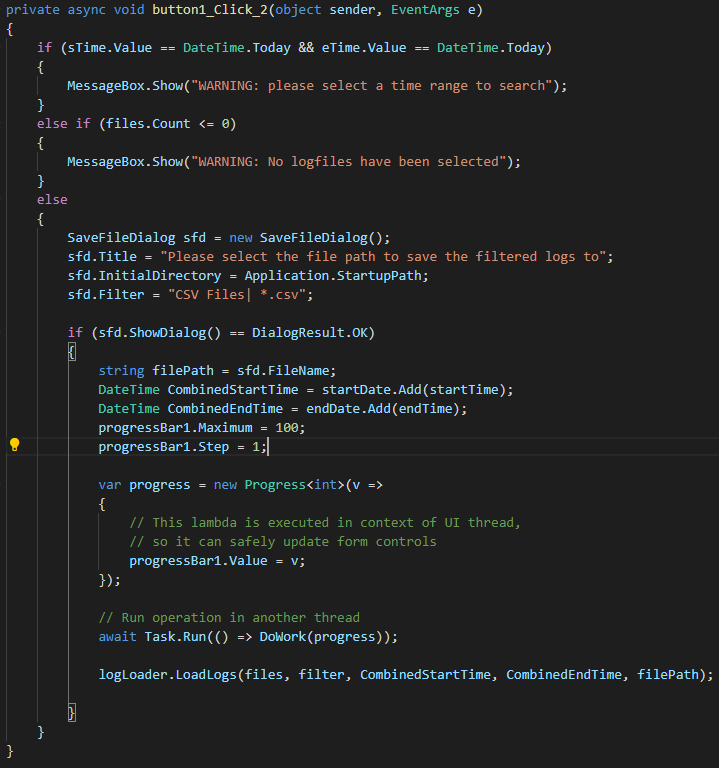

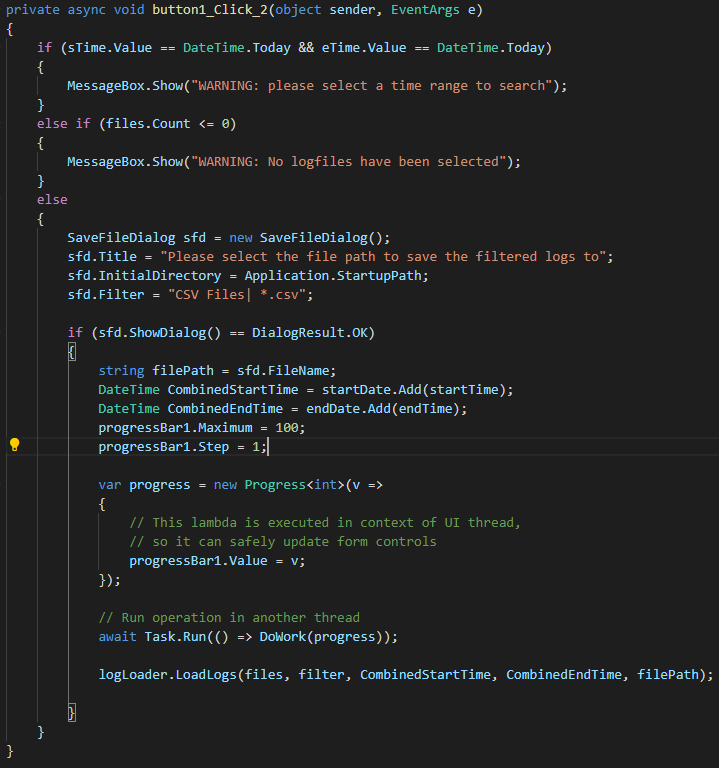

The Search Logs and Export Button code:

I’m not much of a designer but happy with the way it looks and performs.

Conclusion

I hope you enjoyed following along with my thought and development process. This was the first proper dev tool I’ve built, and I think I’m hooked! I’m planning to demo it to the rest of the dev team this week, and I’m expecting a positive reception based on how many times I’ve heard people groan when asked to check these log files.

The whole process has given me a chance to try something new, practice my rusty Regex skills and it’s also sped up my workflow from glacial to absolutely rapid. Having the data so neatly ordered has also revealed several root causes of our response time issues which I’m sure is going to please my PM Monday morning.

Do you have any processes or workflows that you feel are far too tedious, why not build your own dev tool to enhance your productivity?

Good luck!